✨ DeepSeek-V4 is here — a million-token context, 1.6T parameter powerhouse optimized for agentic workflows. Out of the box, on DeepSeek-V4-Pro, NVIDIA Blackwell Ultra delivers over 150 TPS/user interactivity for agentic workflows. And we’re just getting started. Expect these performance figures to climb higher as we implement Dynamo, NVFP4, and advanced parallelization techniques. Start building today with @lmsysorg and @vllm_project

NVIDIA Blackwell Ultra Powers DeepSeek V4 Pro at 150 Tokens Per Second

NVIDIA

NVIDIA reported that DeepSeek-V4-Pro achieves over 150 tokens per second per user on Blackwell Ultra hardware. At this speed, 1.6-trillion-parameter models become viable for real-time autonomous agents. Future software updates such as Dynamo and NVFP4 are expected to push these speeds even higher.

The reported figure is over 150 tokens per second (TPS) per user for interactivity, using the vLLM inference framework (inference is the process of running a trained model).

- Model: DeepSeek-V4-Pro
- Parameters: 1.6 trillion
- Context window: 1 million tokens
- Hardware: NVIDIA Blackwell Ultra
- Throughput: 150+ tokens per second per user
- Software support: vLLM, LMSYS

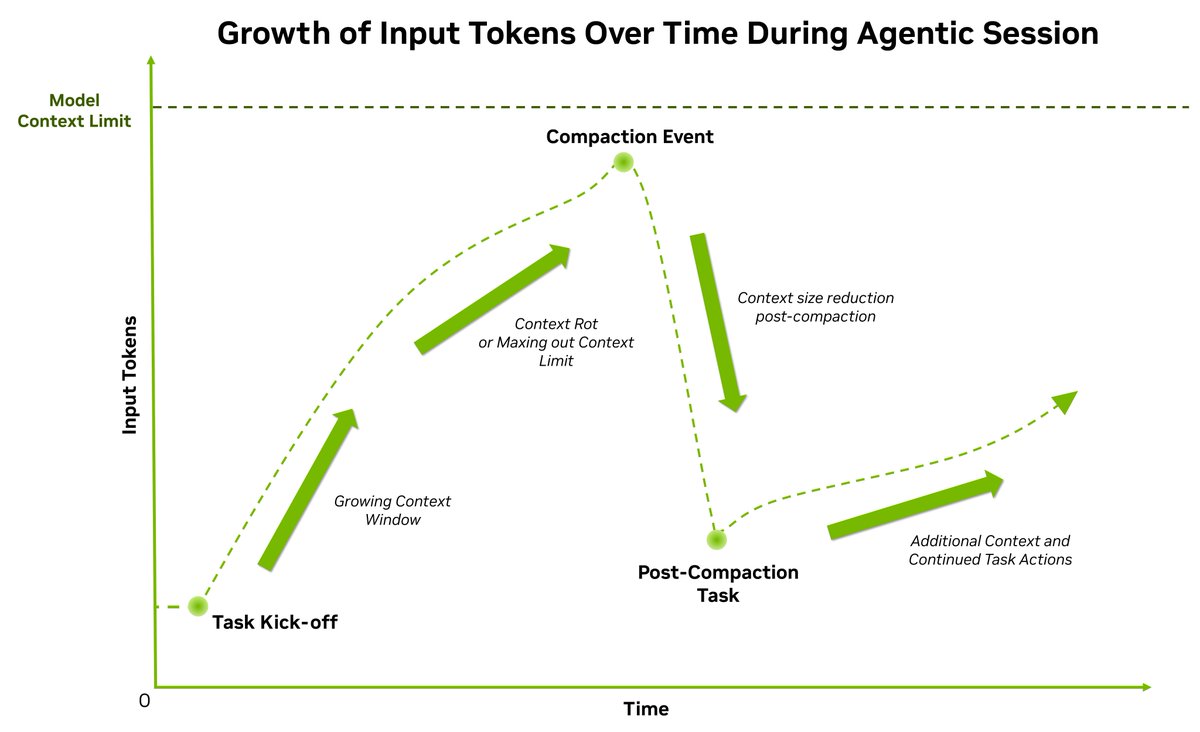

Inference speed is the primary bottleneck for agentic workflows, which chain multiple reasoning steps and wait for each one to finish generating before the next can start. Reaching 150 TPS per user on a model of this scale suggests that massive Mixture-of-Experts architectures can power responsive, autonomous systems. The result is also part of a broader shift toward day-zero support for frontier models in open inference frameworks.
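To make the effect of per-user throughput on a multi-step agent concrete, here is a rough back-of-the-envelope sketch. The workload (10 sequential steps of 600 generated tokens each) and the 30 TPS comparison point are hypothetical values chosen for illustration, not figures from NVIDIA. The estimate covers decode time only and ignores prompt processing, tool calls, and network latency.

```python
def agent_run_seconds(steps: int, tokens_per_step: int, tps: float) -> float:
    """Decode-only time, in seconds, for an agent whose steps run one after another."""
    if tps <= 0:
        raise ValueError("tps must be positive")
    return steps * tokens_per_step / tps


if __name__ == "__main__":
    # Hypothetical workload: 10 sequential reasoning steps, 600 generated tokens each.
    for tps in (30.0, 150.0):
        seconds = agent_run_seconds(steps=10, tokens_per_step=600, tps=tps)
        print(f"{tps:>5.0f} TPS: {seconds:.0f} s of generation")
```

Under these assumptions the same run takes about 200 seconds of generation at 30 TPS and about 40 seconds at 150 TPS. Because the steps are sequential, the saving scales with the number of steps.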

You can start building with these models today through the LMSYS and vLLM projects. NVIDIA expects performance to rise as it extends its software stack with Dynamo 1.0, NVFP4 (a 4-bit floating-point format), and advanced parallelization techniques. These optimizations are expected to further reduce the compute overhead of the model's million-token context window.
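As a rough illustration of why a 4-bit format matters at this scale, the sketch below estimates raw weight storage for 1.6 trillion parameters at different precisions. It is simple arithmetic under stated assumptions: every parameter is stored at the given width, and the extra scale factors that block-scaled formats carry, the KV cache, and activations are all ignored. Which layers of DeepSeek-V4-Pro would actually be quantized is not stated in the source.

```python
def weight_bytes(params: float, bits_per_param: float) -> float:
    """Raw weight storage in bytes, assuming every parameter uses the same bit width."""
    return params * bits_per_param / 8


PARAMS = 1.6e12  # parameter count from the article

if __name__ == "__main__":
    # Assumption: uniform precision, with no per-block scale factors counted.
    for name, bits in (("16-bit", 16), ("8-bit", 8), ("4-bit", 4)):
        terabytes = weight_bytes(PARAMS, bits) / 1e12
        print(f"{name}: {terabytes:.1f} TB of weights")
```

With these simplifications, the weights alone come to roughly 3.2 TB at 16 bits, 1.6 TB at 8 bits, and 0.8 TB at 4 bits.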

