MiMo-V2.5 series from @XiaomiMiMo is live now on OpenRouter! Both V2.5 and V2.5-Pro see improvements over V2-Pro and V2-Omni, with a focus on long running agent tasks and coding ability. They also both launch with 1 million context, and are extremely token efficient. https://t.co/FQUluEFLb7

Xiaomi Launches MiMo-V2.5 Series With 1M Context and Reasoning Tokens

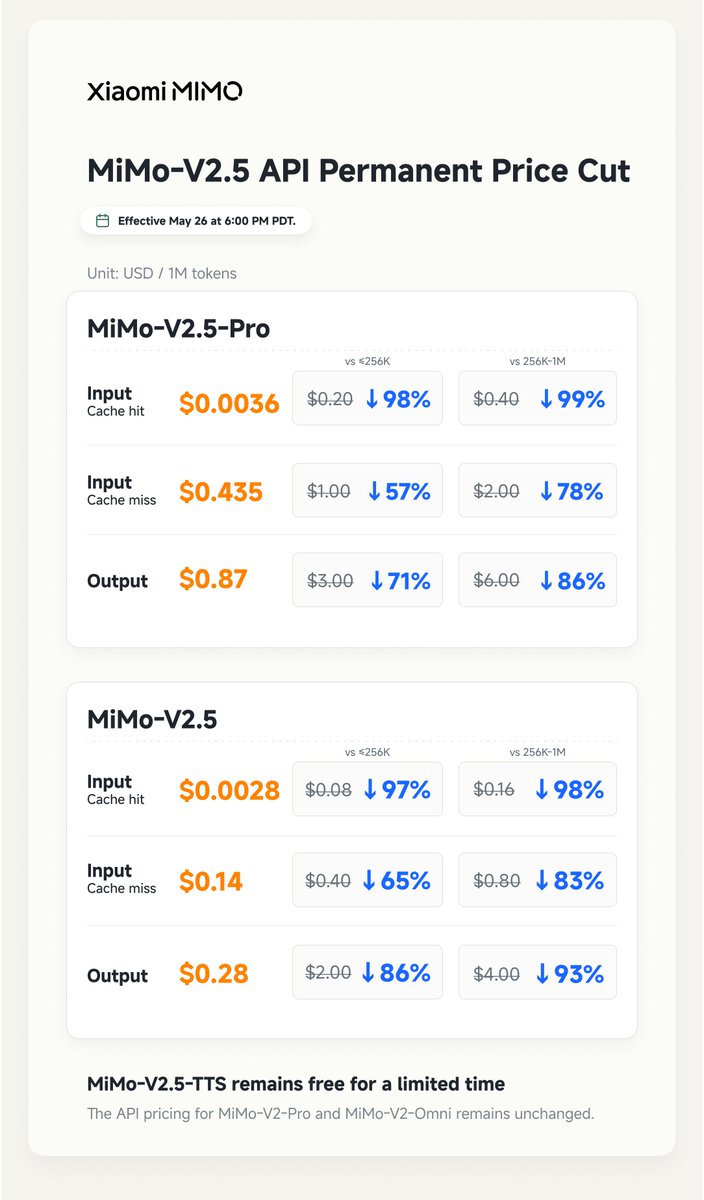

Xiaomi has released the MiMo-V2.5 series on OpenRouter. Both models offer a 1 million token context window and native multimodal support for image and video tasks. They are designed for long-horizon agentic workflows and coding, and they expose reasoning ("thinking") tokens intended to keep multi-step tasks stable. By delivering pro-level performance at roughly half the typical inference cost, the series lowers the cost of deploying autonomous agents at scale.

- Context window: 1,048,576 tokens
- Max output tokens: 131,072 tokens
- Pricing (input): $0.40 per million tokens
- Pricing (output): $2.00 per million tokens
- Modality: Native omnimodal (text, image, video)
- Availability: OpenRouter API
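
To make the pricing concrete, here is a minimal cost-estimation sketch based only on the figures listed above. The function name and the limit checks are illustrative assumptions, not part of any OpenRouter SDK, and the sketch assumes that reasoning tokens are billed as output tokens.

```python
# Figures taken from the spec list above.
INPUT_USD_PER_MILLION = 0.40
OUTPUT_USD_PER_MILLION = 2.00
CONTEXT_WINDOW_TOKENS = 1_048_576
MAX_OUTPUT_TOKENS = 131_072


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimate the cost of one request (hypothetical helper).

    Assumption: reasoning tokens are counted in output_tokens.
    """
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    if input_tokens > CONTEXT_WINDOW_TOKENS:
        raise ValueError("prompt exceeds the listed context window")
    if output_tokens > MAX_OUTPUT_TOKENS:
        raise ValueError("completion exceeds the listed max output")
    input_cost = input_tokens / 1_000_000 * INPUT_USD_PER_MILLION
    output_cost = output_tokens / 1_000_000 * OUTPUT_USD_PER_MILLION
    return input_cost + output_cost


if __name__ == "__main__":
    # A large codebase prompt (800k tokens) with a 20k-token answer.
    print(f"${estimate_cost_usd(800_000, 20_000):.2f}")  # prints $0.36
```

At these rates, even a prompt that fills most of the context window costs well under a dollar, so for long agent runs the total is likely to be driven by how many turns the agent takes rather than by any single call.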

The release makes Xiaomi's agent-centric models more widely available as developers shift toward long-running autonomous workflows. By optimizing for agentic performance and coding stability, the series aims to address reliability problems in multi-step tasks, in line with a broader trend of tuning models specifically for agent pipelines.

You can integrate mimo-v2.5 into agent frameworks to process large codebases in a single pass, provided they fit within the 1M-token context window. The model is available via the OpenRouter API at $0.40 per million input tokens and $2.00 per million output tokens. To use reasoning, enable the reasoning parameter in your request and preserve the reasoning_details array from each response by passing it back unmodified in later turns.
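
The reasoning workflow described above can be sketched as follows. This builds the request payload and conversation history only and makes no network call. The model slug `xiaomi/mimo-v2.5`, the helper names, and the stubbed response are assumptions for illustration, so check the model page on OpenRouter for the exact identifier.

```python
import json

# OpenRouter's chat completions endpoint (send the payload as a POST
# with an "Authorization: Bearer <key>" header).
OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"
MODEL_SLUG = "xiaomi/mimo-v2.5"  # assumed slug, not confirmed by the source


def build_payload(messages: list) -> dict:
    """Build a request body with reasoning enabled (hypothetical helper)."""
    return {
        "model": MODEL_SLUG,
        "messages": messages,
        "reasoning": {"enabled": True},
    }


def append_assistant_turn(messages: list, assistant_message: dict) -> list:
    """Return a new history that keeps reasoning_details untouched."""
    turn = {"role": "assistant", "content": assistant_message.get("content")}
    # Pass the array back exactly as received so the model can
    # continue from its earlier reasoning on the next turn.
    if "reasoning_details" in assistant_message:
        turn["reasoning_details"] = assistant_message["reasoning_details"]
    if assistant_message.get("tool_calls"):
        turn["tool_calls"] = assistant_message["tool_calls"]
    return messages + [turn]


if __name__ == "__main__":
    history = [{"role": "user", "content": "Find the bug in utils.py"}]
    # Stubbed assistant message standing in for a real API response.
    stub = {
        "content": "The loop is off by one.",
        "reasoning_details": [
            {"type": "reasoning.text", "text": "Checked the range bounds."}
        ],
    }
    history = append_assistant_turn(history, stub)
    history.append({"role": "user", "content": "Please write the fix."})
    print(json.dumps(build_payload(history), indent=2))
```

Keeping the history update in one helper means an agent loop cannot accidentally drop the reasoning_details array when it appends tool results or follow-up prompts.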

Every HeadsUpAI update is written based on its original source and reviewed before it's published.