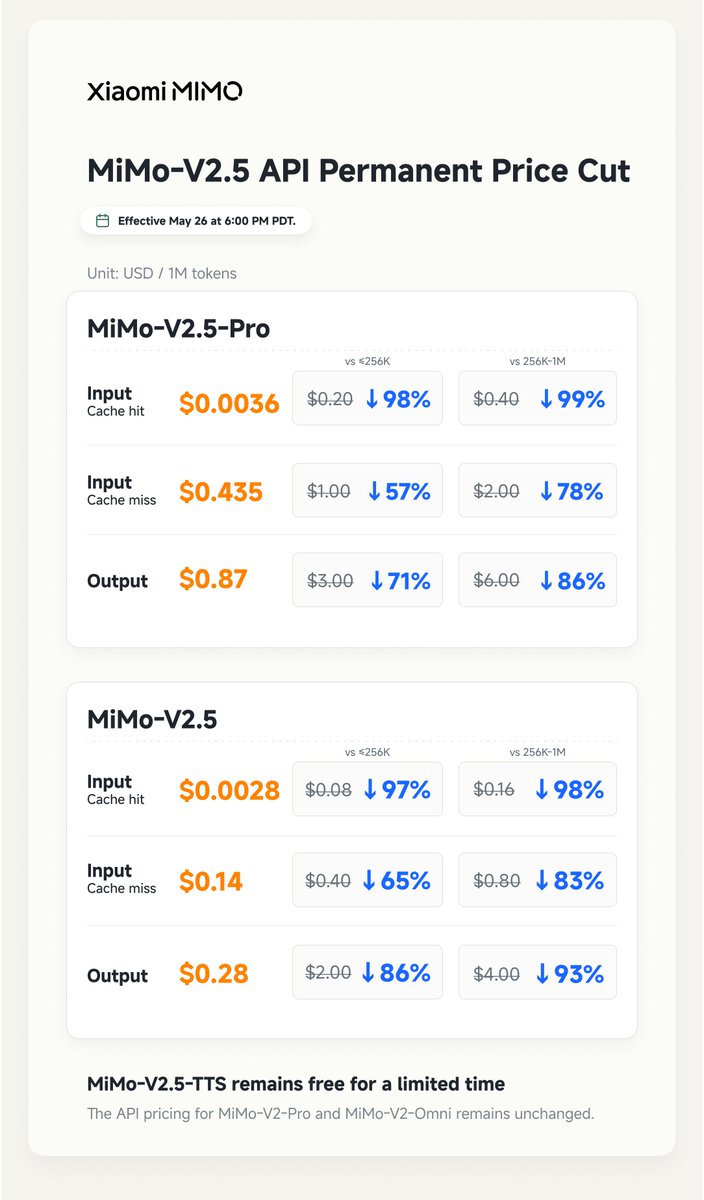

MiMo v2.5 + MiMo v2.5 Pro now available in Go • v2.5 → multimodal • v2.5 Pro → built for coding • price unchanged

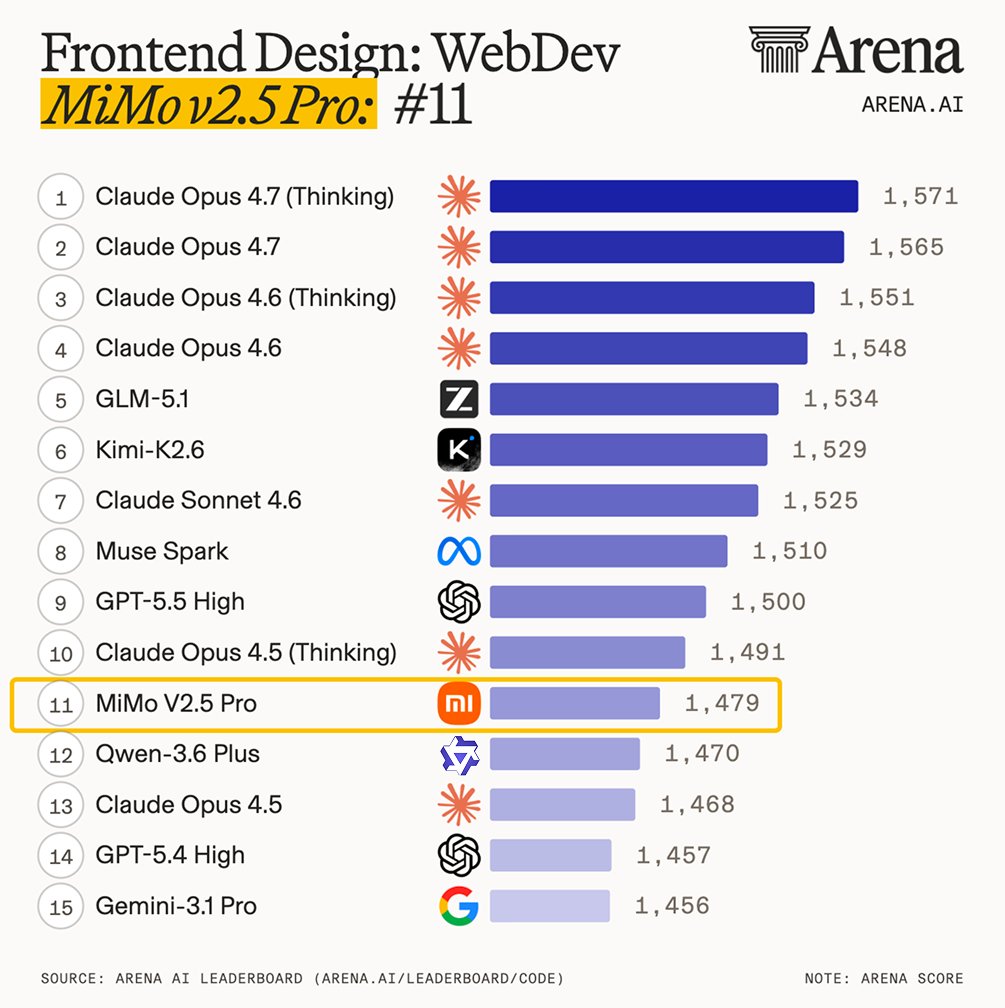

OpenCode Adds Xiaomi MiMo v2.5 Models to Go for Agentic Coding

OpenCode has integrated Xiaomi's MiMo v2.5 and MiMo v2.5 Pro models into its Go platform, adding native multimodality and a coding-specialized variant. These agent-centric models provide a 1-million-token context window for complex engineering tasks, with no change to existing Go pricing.

- Context window: 1M tokens
- Modality: Native multimodal
- Specialization: Coding (v2.5 Pro)
- Pricing: Unchanged from previous Go rates
- Availability: OpenCode Go platform
- Supported inputs: Text, images, video, audio

The v2.5 series is designed for agent-centric workloads, in which systems plan and execute tasks independently and must reason across long chains of steps. With the coding-specialized v2.5 Pro, OpenCode offers a high-performance option for engineering work, following its earlier addition of low-cost reasoning models.

Access both models immediately through the OpenCode Go interface or API with no change to existing pricing. The v2.5 Pro is optimized for complex coding, while the standard v2.5 supports multimodal inputs. This release builds on the recent integration of high-speed coding agents.


Every HeadsUpAI update is written based on its original source and reviewed before it's published.