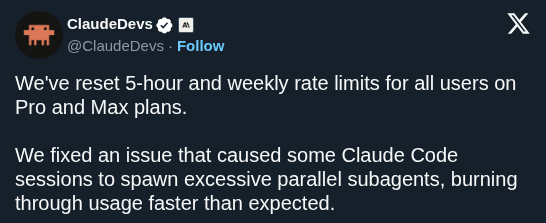

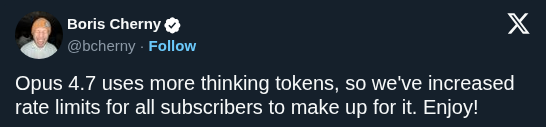

Over the past month, some of you reported Claude Code's quality had slipped. We investigated, and published a post-mortem on the three issues we found. All are fixed in v2.1.116+ and we’ve reset usage limits for all subscribers.

Anthropic fixes Claude Code quality regressions and resets usage limits for subscribers


Anthropic released a post-mortem identifying three issues in the Claude Code and Agent SDK harness that caused recent quality slips. While the underlying models remained stable, the update highlights how orchestration layers can degrade performance even when the AI itself is unchanged.

The fixes ship in version 2.1.116 or later. The regression suggests that reports of models "getting dumber" can be rooted in the harness implementation rather than the model weights. Because Claude Code follows the same agentic architecture as other Anthropic tools, the slip also affected Cowork. The fix restores the high-speed navigation and reasoning capabilities users expect.

Subscribers should update to v2.1.116 or later to get the fixes; Anthropic has also reset usage limits for all subscribers. The stabilization follows recent ecosystem expansions like external application triggers for Claude Desktop. To prevent future regressions, Anthropic is expanding its internal evaluation suites and dogfooding configurations that mirror user setups.
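For readers who want to confirm they are on a fixed release, here is a minimal sketch of the version comparison. It assumes you already have your installed version as a string (how you obtain it depends on your setup), and the helper names `parse_version` and `has_fixes` are hypothetical, not part of any Anthropic tool.

```python
import re

# First release reported to contain the fixes, per the announcement quoted above.
MIN_FIXED = (2, 1, 116)


def parse_version(text: str) -> tuple[int, int, int]:
    """Extract the first MAJOR.MINOR.PATCH triple found in a version string."""
    match = re.search(r"(\d+)\.(\d+)\.(\d+)", text)
    if match is None:
        raise ValueError(f"no version number found in {text!r}")
    major, minor, patch = (int(part) for part in match.groups())
    return (major, minor, patch)


def has_fixes(version_text: str) -> bool:
    """Return True if the version is at or above the first fixed release."""
    # Tuples compare element by element, so 2.1.99 sorts below 2.1.116,
    # which a plain string comparison would get wrong.
    return parse_version(version_text) >= MIN_FIXED
```

Comparing the parsed integers rather than the raw strings matters here: as text, "2.1.99" sorts after "2.1.116", even though it is the older release.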


Every HeadsUpAI update is written based on its original source and reviewed before it's published.